Hi, I'm Harshvardhan Sekar

Transforming complex data into actionable intelligence across Credit Risk, Healthcare, and AI.

About Me

Hi, I'm Harshvardhan "Harsha" Sekar, a Data Science and Machine Learning professional pursuing my Master's in Information Management at the University of Illinois Urbana-Champaign. Previously, I worked at HSBC as a Decision Science Analyst in Credit Risk, where I worked on IFRS9-compliant loss forecasting models and automated reporting pipelines for mortgage portfolios exceeding $18B. My background bridges finance, data engineering, and applied machine learning, translating complex data into actionable insights that drive smarter, risk-aware decisions.

At Illinois, I've expanded my focus to Generative AI and Large Language Models, developing systems that blend language, vision, and retrieval-based reasoning. My recent work includes a Retrieval-Augmented Medical QA Agent using T5, BioBART, and SBERT, and a Multimodal Comics Understanding Model leveraging OpenFlamingo, LLaVA, and Stable Diffusion. I'm passionate about building ethical, grounded, and scalable AI systems that enhance how people interact with information — from credit modeling and healthcare analytics to intelligent, trustworthy LLM applications.

My Projects

Medical Question Answering Agent using MedQuAD

Built a supervised NLP pipeline leveraging MedQuAD dataset (47K+ NIH-sourced Q-A pairs) to enhance reliability in medical question answering. Integrated transformer models (T5, BioBART, PubMedBERT) with dense retrievers (SBERT, ColBERTv2) in a Retrieval-Augmented Generation architecture, improving factual accuracy for domain-specific healthcare applications.

CECL Credit Risk Analytics — LendingClub Portfolio

End-to-end CECL-compliant credit risk platform for a $11.7B LendingClub consumer lending portfolio (1.35M loans, 2007-2018). Developed PD scorecard (AUC 0.693) and XGBoost ensemble (AUC 0.720, 50 SHAP-selected features), two-stage LGD (MAE 7.6%), and EAD models. Built synthetic monthly panel (31.4M rows) for institutional-format receivables tracking and forward default flow rates across 7 DPD buckets. Implemented 3-scenario macro stress testing with multi-quarter UNRATE paths, producing probability-weighted ECL across Pre-FEG, Central, and Post-FEG views ($2.9B Post-FEG, 64.6% ECL variance under stress). All population stability indices GREEN (PSI < 0.04).

Oscar Award Winner Predictions

Designed a comprehensive analytics and machine learning pipeline to predict Oscar-winning films and Emmy-winning TV shows using audience metrics, critic ratings, and production metadata. Integrated data engineering, predictive modeling, and visualization in a single workflow combining Tableau Prep, Python ML models, and Power BI dashboards.

Pneumonia Detection using Computer Vision

Built a four-class deep learning classifier to detect Normal, Bacterial Pneumonia, Viral Pneumonia, and COVID-19 from chest X-rays using transfer-learned architectures (VGG-16, DenseNet-201, Xception, EfficientNet-B0). Curated multiple open-source CXR datasets into a unified training pipeline with preprocessing, data augmentation, and transfer learning from ImageNet weights. The best model (VGG-16) achieved ~90% accuracy with robust differentiation between pneumonia subtypes.

Integrating AI to Optimize the Brisbane 2032 Olympic Games

Developed a consulting-grade strategy using McKinsey's 7-Step framework to integrate AI technologies — including Digital Twins, Predictive Analytics, and Smart City Systems — to enhance sustainability, reduce costs (~A$300M), and lower carbon emissions (~8,000 tons CO₂e) for the Brisbane 2032 Olympic Games. Combined cost-benefit modeling, vendor benchmarking, and an implementation roadmap aligned with IOC sustainability targets, delivering a phased rollout strategy for AI-powered venue optimization, energy management, and operational efficiency.

Netflix Data Warehouse with Metadata Validation

Built a scalable Snowflake data warehouse solution to ingest, clean, and validate Netflix catalog metadata using Python preprocessing and OMDb API verification. Validated runtime/duration data for 7,787+ titles, identifying and correcting ~27% with inconsistencies (e.g., 1-minute TV shows, 6-minute movies). Implemented ELT pipelines with star schema dimensional modeling to improve data accuracy for recommendation systems, catalog quality assurance, and high-performance analytics.

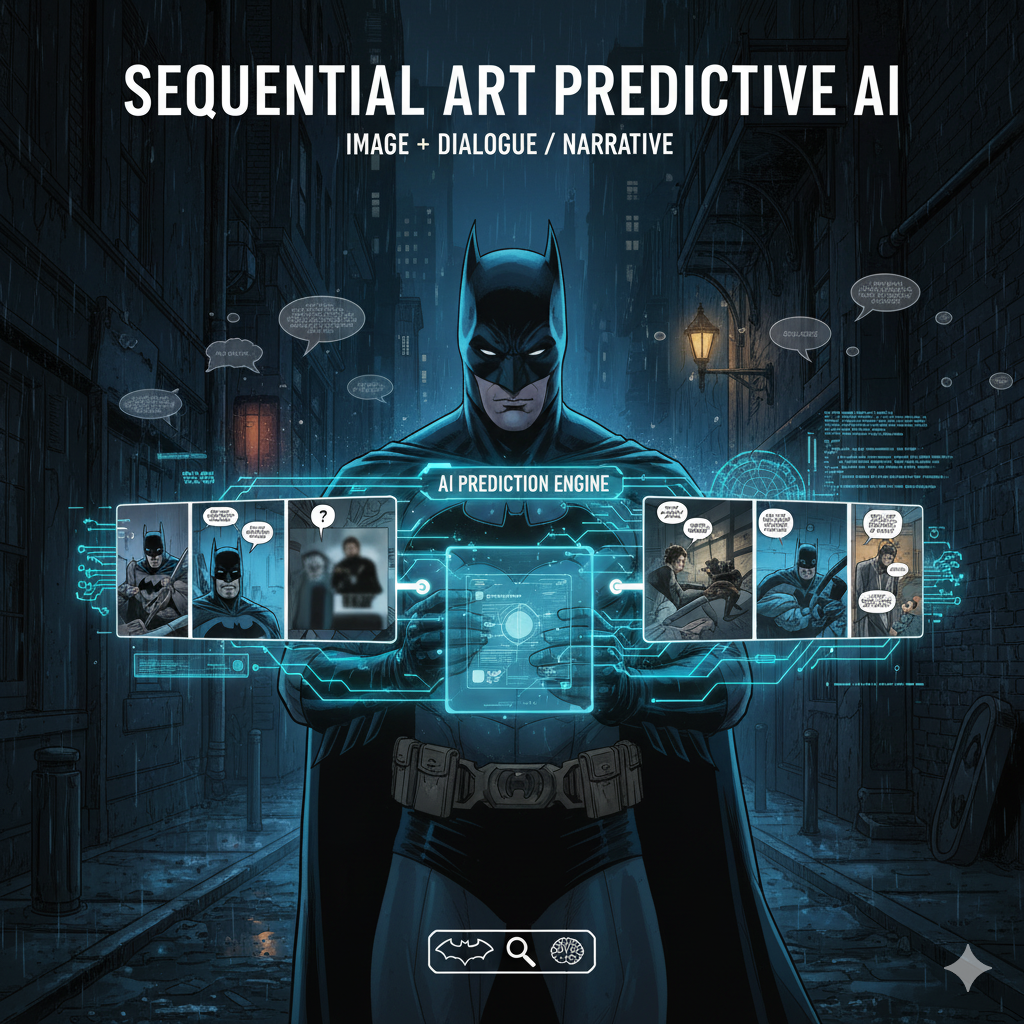

Multimodal Cloze in Comics using Vision-Language Models

Built an end-to-end vision-language modeling pipeline to perform closure—predicting missing dialogue and generating corresponding artwork between comic panels. Leverages LLaVA, OpenFlamingo, and Stable Diffusion to jointly reason over text and imagery from the COMICS dataset (1.2M panels). Includes preprocessing with YOLOv8 for panel segmentation, CRAFT for text balloon detection, and EasyOCR for dialogue extraction, enabling multimodal narrative understanding and AI-assisted comic creation.

Experience & Education

Master of Science in Information Management

University of Illinois at Urbana-Champaign

Decision Science Analyst

HSBC - Loss Forecasting & Credit Decision Modelling

Bachelor of Technology in ECE

National Institute of Technology, Tiruchirappalli

Skills & Certifications

Programming

ML & AI

NLP & LLMs

Cloud & Data

Databases & BI

Risk Frameworks

Quantitative Modeling

PD/LGD/EAD development, loss forecasting, macro stress testing, and regulatory capital modeling.

Machine Learning

Supervised and unsupervised learning, ensemble methods, deep neural networks, and model interpretation.

NLP & LLMs

Transformer architectures, retrieval-augmented generation, fine-tuning, and prompt engineering.

Data Engineering

ETL/ELT pipelines, data warehouse design, cloud migration, and big data processing.

Get In Touch

Have a question or interested in collaborating? Feel free to reach out!